
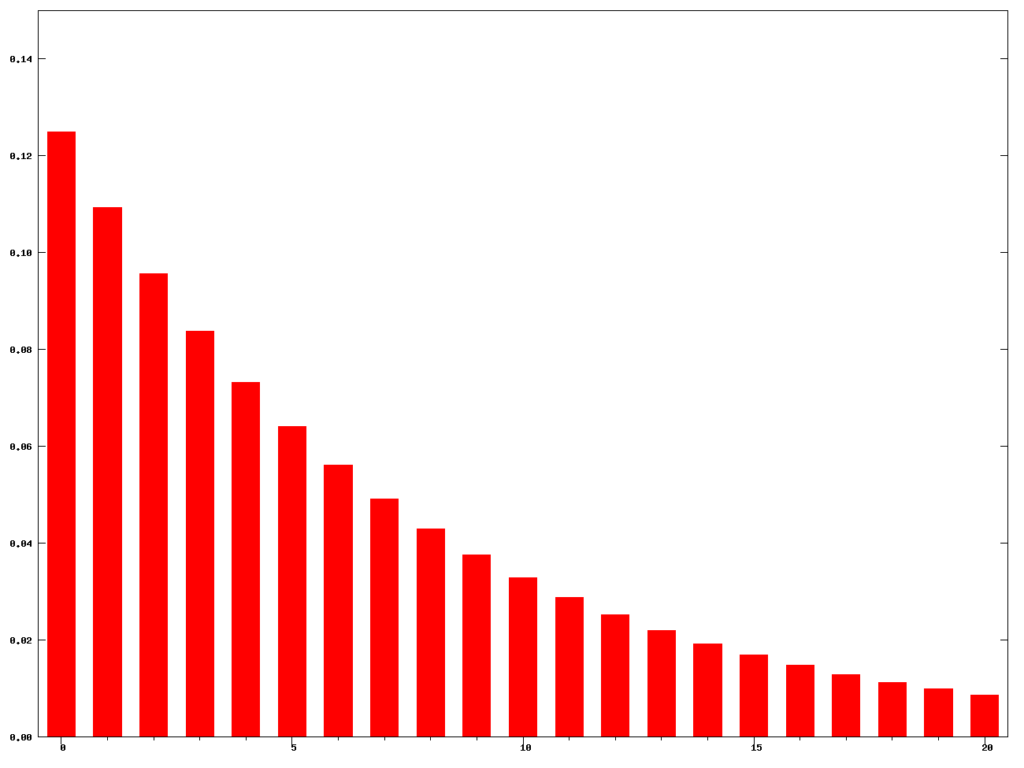

- Thinking more deeply about the resampling
- The leap to big data: the Poisson trick

The bootstrap (Efron 1979) is an incredibly practical method to estimate uncertainty from finite sampling on almost any quantity of interest. If, say, you’re training a model using just 30 training examples, you’ll likely want to know how uncertain your goodness-of-fit metric is. Is your AUC statistically consistent with 0.5? That’d be key to know, and you could estimate it with the bootstrap.

The standard bootstrap, however, does not scale well to big data, and for unbounded data streams it’s in fact not well defined. The standard bootstrap assumes you have all your data locally available, it’s static, it fits into primary memory, and it’s easy to compute your metric of interest (AUC in the above example).

There has been lots of exciting new research around scaling the bootstrap to unbounded and distributed data. The Bag of Little Bootstraps paper distributes the bootstrap, but issues a standard “static” bootstrap on each thread, so it doesn’t solve the unbounded data problem. The Vowpal Wabbit paper solves the unbounded data problem, but on a single thread. A Google paper puts together a bootstrap that is both streaming and distributed.

The streaming distributed bootstrap is a really fun solution, and I’ve mocked up a Python package to test it out. At the end of this post I’ll set loose a streaming bootstrap on the Twitter firehose, computing the mean tweet rate on top trending terms at the time I ran it, with streaming one-sigma error bands.

In this article, I’m going to assume you’re already a fairly technical person who understands why you’d want to estimate uncertainty on a big data application.

The snippets in this post use R, with a handful of libraries and global settings.

The index identifies the data point, the count is simply the number of times that data point appears, and the value is “the data.” The mean of the data is 0.48 and the standard error on the mean is 0.05.

I’m going to start by histogramming the counts of each index. This is a trivial histogram: there’s just one of everything.

Figure: Trivial histogram of the counts of data points.

The bootstrap procedure is to

1. resample the $N$ data points $N$ times with replacement,
2. compute your quantity of interest on the resampled data exactly as if it was the original data, and
3. repeat steps 1 and 2 many times.

The resulting distribution of the quantity of interest is an empirical estimate of the sampling distribution of that quantity. This means the mean of the distribution is an estimate of the quantity of interest itself, and the standard deviation is an estimate of the standard error of that quantity. That last point is tricky and worth memorizing to impress your statistics friends at parties. (Just kidding, statisticians don’t go to parties!)

Running the bootstrap on this data set, with the mean of the data as the quantity of interest, gives:

Compare bootstrap mean 0.477468987332949 to sample quantity of interest 0.477104055796129

Compare bootstrap standard deviation 0.0547528263387387 to the sample standard error of the quantity of interest 0.0555938381440978

So both the bootstrap mean and the data mean are consistent with the population mean of 0.5 within one standard error of the mean, and the analytical estimator of the standard error is consistent with the bootstrap standard deviation.

Thinking more deeply about the resampling

It turns out that step #1 in the bootstrap procedure can be thought of as rolling an unbiased $N$-sided die $N$ times, and counting the number of times each face (index) comes up. This is a result from elementary statistics: the counts of the faces follow a multinomial distribution with $N$ trials and probability $1/N$ for each face.
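As a rough illustration of the resampling procedure, here is a minimal sketch in Python (rather than the post's R), using only the standard library. The function names `bootstrap_mean` and `resample_counts`, the default of 2000 resamples, the seeds, and the 30 synthetic uniform data points are all assumptions made for this example; they are not the author's data or code.

```python
import random
import statistics
from collections import Counter


def bootstrap_mean(data, n_boot=2000, seed=0):
    """Return (mean, standard deviation) of the bootstrap distribution of the sample mean."""
    rng = random.Random(seed)
    n = len(data)
    estimates = []
    for _ in range(n_boot):
        # Step 1: draw N points from the N data points, with replacement.
        resample = rng.choices(data, k=n)
        # Step 2: compute the quantity of interest as if this were the original data.
        estimates.append(statistics.fmean(resample))
    # The spread of the estimates approximates the standard error of the mean.
    return statistics.fmean(estimates), statistics.stdev(estimates)


def resample_counts(n, seed=0):
    """Roll an unbiased n-sided die n times and count how often each index comes up."""
    rng = random.Random(seed)
    rolls = Counter(rng.randrange(n) for _ in range(n))
    return [rolls.get(i, 0) for i in range(n)]


# Synthetic stand-in data (an assumption): 30 uniform draws on [0, 1), population mean 0.5.
data_rng = random.Random(42)
data = [data_rng.random() for _ in range(30)]

boot_mean, boot_sd = bootstrap_mean(data)
sample_mean = statistics.fmean(data)
sample_sem = statistics.stdev(data) / len(data) ** 0.5

print(f"bootstrap mean {boot_mean:.4f} vs sample mean {sample_mean:.4f}")
print(f"bootstrap sd   {boot_sd:.4f} vs sample SEM  {sample_sem:.4f}")
print("resample counts:", resample_counts(len(data)))
```

The counts returned by `resample_counts` always sum to $N$, which is the die-rolling view of a single resampling step: some indices appear several times and others not at all.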

#Python exponentially weighted standard deviation series#

There are well-known on-line formulas for computing exponentially weighted moving averages and standard deviations of a process $(x_n)$ […] $\ldots(Y)\,\beta^2 t^2 + \cdots)$.

To obtain the desired non-central moments, multiply the latter power series through fourth order in $t$ and equate the result term-by-term with the terms in $\phi_Z(t)$.
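For the on-line exponentially weighted mean and standard deviation mentioned here, one commonly used incremental update can be sketched as follows. This is a sketch under assumptions and not necessarily the formulation the text has in mind: the function name `ewm_mean_std`, the smoothing parameter `alpha`, and the choice to start from the first observation with zero variance are all illustrative.

```python
def ewm_mean_std(values, alpha):
    """Return a list of (mean, std) pairs, one per observation, updated on-line.

    alpha is an assumed smoothing weight in (0, 1]; larger values forget the past faster.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    it = iter(values)
    try:
        mean = float(next(it))  # assumed initialisation: first observation
    except StopIteration:
        return []
    var = 0.0  # assumed initialisation: no spread after a single observation
    out = [(mean, 0.0)]
    for x in it:
        diff = x - mean
        incr = alpha * diff
        mean += incr  # exponentially weighted mean update
        var = (1.0 - alpha) * (var + diff * incr)  # exponentially weighted variance update
        out.append((mean, var ** 0.5))
    return out


series = [1.0, 2.0, 3.0]
for mean, std in ewm_mean_std(series, alpha=0.5):
    print(f"mean={mean:.4f} std={std:.4f}")
```

Each update uses only the previous mean and variance, so the series can be processed one observation at a time without storing its history.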